How "Force Fail" can help with false negatives in testing


Machine learning is changing testing by making features like self-healing tests possible. But what happens when a test self-heals incorrectly? This is where “Force Fail” comes in.

What is a “false negative” in testing and when should you “force fail” tests?

Machine learning makes it possible for tests to “self-heal,” which saves testers countless hours of maintenance spent fixing broken tests. However, in certain circumstances this may result in “false negatives”: tests that passed when they should have failed. False negatives need to be corrected so that the machine learning model can learn from your tests and pass or fail them correctly in future executions. This corrective action is called “Force Fail.” The feature was released in Functionize 4.0 and allows you to manually fail tests that have passed.

What causes “false negatives” to occur in testing?

By far the most common reason for false negatives is failing to add proper verifications to your test. Remember, these assertions must be defined in advance so that the test can verify which behaviors are working properly in your application. If your test doesn't contain verifications, then it is just a step-by-step flow with no way for the system to know when things have gone awry.

What is an example of a verification step?

Let's say that you are testing a food delivery website. For your test, you would like the user to check out and select the delivery time for their food. One verification may be to confirm that the button to select the delivery time defaults to today's date. If you did not add this verification step when creating the test, then the test won't check the date during execution.
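As a rough illustration, a verification like this can be thought of as an assertion on the state of the page. The sketch below is plain Python, not Functionize's test format; the `CheckoutPage` class and its `delivery_date` field are hypothetical stand-ins for the page under test.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class CheckoutPage:
    """Hypothetical stand-in for the checkout page under test."""
    delivery_date: date


def verify_delivery_date_defaults_to_today(page: CheckoutPage, today: date) -> bool:
    """Verification step: the delivery selector should default to today's date."""
    return page.delivery_date == today


# A fixed date keeps the example deterministic.
today = date(2024, 5, 1)
good_page = CheckoutPage(delivery_date=today)
stale_page = CheckoutPage(delivery_date=date(2024, 4, 30))

print(verify_delivery_date_defaults_to_today(good_page, today))   # True
print(verify_delivery_date_defaults_to_today(stale_page, today))  # False
```

Without a check of this kind, nothing in the flow ever compares the default date against an expected value, so a wrong default would go unnoticed.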

Why would a test self-heal when the test was supposed to fail?

Functionize's machine learning model solves the biggest pain point in test automation: fixing broken tests. So, Functionize tests always try to determine if a test failure is just due to changes in your application. As the test is executed, the machine learning algorithm will check every page to determine which elements to interact with.

If the element it was looking for is no longer found, it will identify the most probable alternative element on the page in an attempt to self-heal. Only verifications are excluded from the self-healing behavior. This is one reason why it is important to add verifications to your test to specify and enforce checkpoints.

Going back to our example, imagine your site logic changes so that the delivery time selection moves to the second page of the checkout. Because there isn't an explicit verification on the delivery time button, the system might try to self-heal. For instance, it might choose the shipping method button as the most likely correct element. This self-heal causes the test to pass erroneously. You can easily avoid false negatives like this by making sure to include proper verifications in your test.
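To make the failure mode concrete, here is a toy model of the scenario. It is only a sketch under simplifying assumptions: the attribute-matching score in `match_score` is a hypothetical stand-in and not how Functionize's machine learning model actually ranks elements, and all element attributes are invented for the example.

```python
from typing import Callable, Optional

Element = dict[str, str]


def match_score(candidate: Element, target: Element) -> int:
    """Count the target attributes that the candidate shares (toy heuristic)."""
    return sum(1 for key, value in target.items() if candidate.get(key) == value)


def resolve(page: list[Element], target: Element) -> tuple[Element, bool]:
    """Return (element, healed): an exact match if present, else the best alternative."""
    for element in page:
        if element == target:
            return element, False
    return max(page, key=lambda e: match_score(e, target)), True


def run_step(page: list[Element], target: Element,
             verify: Optional[Callable[[Element], bool]] = None) -> str:
    """Run one step; an optional verification is checked strictly, with no healing."""
    element, _healed = resolve(page, target)
    if verify is not None and not verify(element):
        return "failed"
    return "passed"


delivery = {"tag": "button", "section": "checkout", "label": "Delivery time"}
shipping = {"tag": "button", "section": "checkout", "label": "Shipping method"}
help_link = {"tag": "a", "section": "footer", "label": "Help"}

# After the site change, the delivery button is no longer on the first page.
new_page = [shipping, help_link]


def is_delivery_time(element: Element) -> bool:
    return element["label"] == "Delivery time"


print(resolve(new_page, delivery)[0]["label"])                # Shipping method
print(run_step(new_page, delivery))                           # passed (false negative)
print(run_step(new_page, delivery, verify=is_delivery_time))  # failed
```

In this model the step without a verification silently heals onto the shipping method button and passes, while the same step with a verification fails as it should.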

How can I “Force Fail” a test?

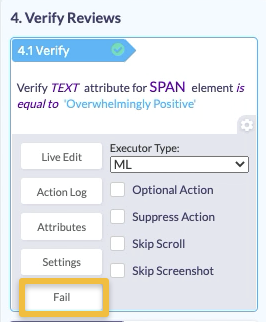

Whether your false negative was caused by a missing verification or something else, you can now use “Force Fail” to intentionally mark a test as failed. To do this, navigate to the slider view of the action and click the “Gear” icon for the specific step you want to fail.

Once you click the Fail button, the overall test is marked as failed as well.

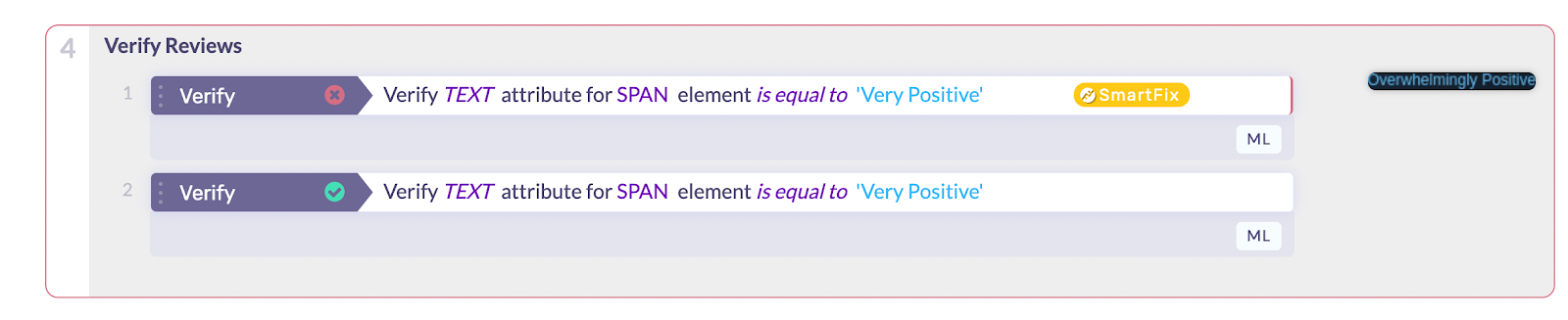

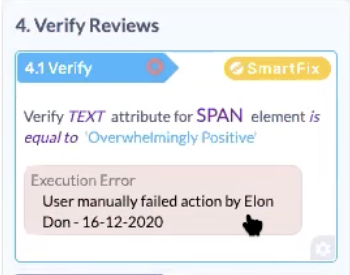

When you click into the step details, you will see an indication that the test was manually failed.

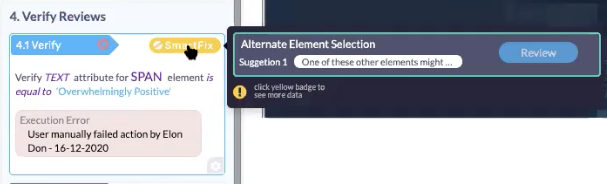
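The relationship between a step and the overall result can be pictured with a small data model. This is a hypothetical sketch, not Functionize's internal representation: the `StepResult` and `RunRecord` classes and the `manually_failed` flag are assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class StepResult:
    name: str
    status: str = "passed"         # "passed" or "failed"
    manually_failed: bool = False  # set only when a user force-fails the step


@dataclass
class RunRecord:
    steps: list[StepResult] = field(default_factory=list)

    @property
    def status(self) -> str:
        """The run fails as soon as any single step has failed."""
        return "failed" if any(s.status == "failed" for s in self.steps) else "passed"

    def force_fail(self, step_name: str) -> None:
        """Mark a passed step as failed and record that a human did it."""
        for step in self.steps:
            if step.name == step_name:
                step.status = "failed"
                step.manually_failed = True
                return
        raise KeyError(step_name)


run = RunRecord(steps=[StepResult("Open checkout"), StepResult("Select delivery time")])
print(run.status)  # passed

run.force_fail("Select delivery time")
print(run.status)                    # failed
print(run.steps[1].manually_failed)  # True
```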

NOTE: In certain situations, when you perform a “Force Fail,” the product may provide a SmartFix suggestion recommending how the test can be optimized for future executions. For example, it may offer some alternate elements to select on the page. If you accept a SmartFix suggestion (e.g., selecting a different element on the page), then future executions of the test will check for that element instead. These SmartFix suggestions give you another peek into how Functionize's machine learning continually looks for ways to optimize your tests.

Summary

Don't forget to include verification steps in your tests! Otherwise, there's a chance our self-heal functionality may get it wrong, leading to false negatives. This is because the machine learning algorithm is doing its best to understand how your application works and what the intent of your test is. When it gets this wrong, “Force Fail” is a way to signal to the algorithm that it needs to relearn. If you then modify your test with proper verifications, you make it more likely that the model will run your tests correctly in the future.